Issue 3/2019 - Net section

Time to Get Closer to our Machines

Interview with Paul Feigelfeld and Marlies Wirth, curators of the exhibition Uncanny Values – Artificial Intelligence & You at the 2019 Vienna Biennale

The Vienna Biennale For Change 2019 is dedicated to the theme "Beautiful new values - shaping our digital world". One of the Biennale's core components is the exhibition Uncanny Values - Artificial Intelligence & You, which explores the manifold relationships of the individual to increasingly immersive, yet also increasingly invasive, technical environments. Below, the two curators explain the show's background, conceptual orientation and ethical dimensions.

Christa Benzer: New horror stories about Artificial Intelligence appear every day. The exhibition, by contrast, is far from creating a scenario of fear. Why, then, does the title still contain the term "uncanny"?

Marlies Wirth: Uncanny Values is a play on words based on the term "uncanny valley": a concept from the roboticist Masahiro Mori, which describes how humans find robots cute until they become too similar to us. At that point, the curve drops into what is known as the "uncanny valley". At the same time, we refer to Sigmund Freud's notion of the "uncanny" (1919). In our view, the unfamiliar within the familiar is a good description of what is uncanny about AI: its intelligence seems human-like to us.

Paul Feigelfeld: We want to show that it is not the technology itself that is uncanny, but the debates about values surrounding it: the availability of information, educational policy, monetarisation, etc. We want to shed light on the shortcomings without being dystopian.

Benzer: I'll stick with psychology for a moment. In the context of AI, people often speak of an insult to humankind: machines can drive better than we can and will perhaps win literature prizes at some point. What can one do in light of this situation?

Wirth: I recently watched Ric Burns's documentary New York again. It chronicles the beginning of the machine age: the construction of skyscrapers, highways, bridges and so on. An urban studies researcher explains that humans shaped the landscape in a way that allowed us to enjoy it from our cars. The feeling of being offended arises because all of this was associated with a sense of freedom, much like cigarette advertising. From today's point of view, all of that is bad. Nevertheless, we will have to adapt our values, because at some point it will be inconceivable for a single individual to own a machine that pollutes the environment, let alone for everyone to own such a machine just for themselves without sharing it.

Benzer: That sounds almost optimistic, yet what artists like Trevor Paglen or James Bridle bring to light is rather worrying, isn't it?

Feigelfeld: These artists are concerned with revealing the mechanisms and potential of these technologies, which at the moment are primarily being concealed. What Trevor Paglen or Constant Dullaart render visible is the massive transformation of techno-economic infrastructure: everything is currently being configured so that every Google search, every Facebook photo, every Instagram post and every tweet contributes to a learning process that does not only serve the good of humankind, but is also strongly geared towards making a profit.

Benzer: What are the "values" addressed in the exhibition?

Wirth: We do not list any values in the exhibition. What we put forward for consideration is the way in which values are conveyed: monetary values, but also the value of our planet and of nature. There are often calls for AI to be used to control our CO2 emissions. However, if you had an AI calculate what is harming our planet, a city like Dubai would have to be razed to the ground immediately because of its energy consumption. These are difficult questions, and the local population would not be happy about that. In general terms, we would like to show that it is better not to leave the discussion of values to the corporations: think of key concepts like data security, crypto-cyberwar, self-parameterization ...

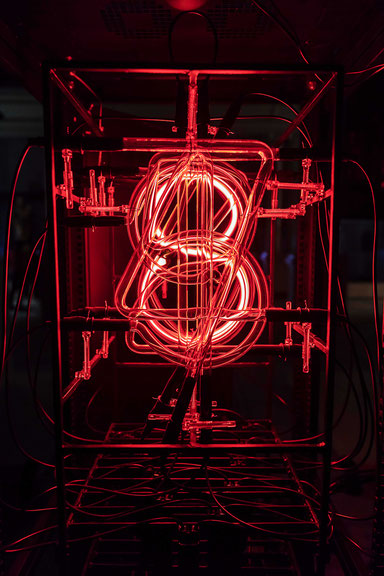

Feigelfeld: One good example is This Much I'm Worth (The self-evaluating artwork) by Rachel Ara. Her machine continuously calculates its own value and thus that of the artist, but also that of the citizen and user. Work by Simon Denny is equally important in this context, as is, of course, art by Giulia Bruno and Armin Linke, and by James Bridle. They raise precisely these questions: What does it mean to be human, what does it mean to doubt, and what does it mean to understand oneself? At the same time, we cannot avoid growing closer to machines as we coexist and evolve alongside them.

Benzer: Is it fair to say that ethical discussions are becoming more important right now?

Feigelfeld: Recently there was a brief hype about an ethics council at Google. It only lasted a week, though. The council was dissolved again because someone from a right-wing extremist think tank had been appointed to it, and all the members were men, etc.

Wirth: An ethics of AI must also take into account the conditions under which these technologies are produced. We are showing Anatomy of an AI System by Kate Crawford and Vladan Joler. The diagram illustrates how an Amazon Echo is made: from the mining of rare earths for its batteries to its contextualization in security policy. It shows that Amazon's CEO is right at the very top of the ladder, while a miner is paid almost nothing, not to mention the working conditions in the factories.

Benzer: Apart from artists, who else is currently working on these questions?

Feigelfeld: Of course, it is only industry, governments or the secret services that have the resources to do so. Slowly, however, small islands of independent research and practice are emerging, and these need to be supported. Kate Crawford, for example, heads the AI Now Institute in New York, a research institute set up two years ago that does smart, independent research in the field through the prism of gender equality and ethnic diversity...

Wirth: ... and, interestingly enough, has an artist residency. Trevor Paglen and Heather Dewey-Hagborg have already taken part in it.

Benzer: Artificial Intelligence & You is the exhibition's tagline. A central statement of the exhibition is that everyone is affected. Does the individual still have the power to act?

Feigelfeld: I would say yes. We must develop global approaches to action in which each individual's decisions matter: it starts with the party I vote for and the products I buy, and runs all the way through to the software I use and the decisions I make about educating my children. There is still a huge gap between national and international politics on the one hand and the technology sector on the other. We must try to close this gap, both individually and collectively.

Wirth: Our exhibition seeks to contribute to exactly that. For example, we are presenting the "AI Pods" programmed by Process Studio using Raspberry Pis: concrete applications of AI such as "AImojis" (AI-generated emojis) or an AI-generated font. In contrast to standard PCs, Raspberry Pis are very small, simply constructed computers that make it easier to learn programming and hardware skills. Technology should be made accessible. Young people in particular ask again and again in our exhibitions whether they can do this too. It is all about empowerment, and by now we can reply: yes, you can train your own neural network.

Uncanny Values - Artificial Intelligence & You, MAK Vienna, 29th May to 6th October 2019; http://www.viennabiennale.org/

Translated by Helen Ferguson